Best AI Coding Tools for Designers in 2026: Figma to Code, v0, Cursor & Codex

A practical decision guide for designers comparing Figma-to-code fidelity, repo workflows, AI app builders, and creative production tools by what you actually need to ship.

⚡ TL;DR

- The big idea: The AI design stack no longer has one center. Some tools make prototypes, some preserve Figma context, some work inside repos, and some operate production tools like Photoshop, Blender, and Premiere.

- The practical map: Claude Design and Figma Make for visual prototypes. Figma MCP for system context. Codex, Claude Code, v0, and Cursor for repo work. Lovable, Bolt, and Replit for app-builder demos. Claude and Adobe connectors for creative production.

- What still matters: The more AI tools can generate, the more valuable design judgment becomes. Designers need to know where the output should land, what needs to stay editable, and when generated work still needs critique.

Short answer: pick by output, not hype

If you are a designer comparing AI coding tools, the real question is not which tool is smartest. It is which tool preserves the design intent: Figma context, code quality, component structure, responsive behavior, and enough control to fix the output.

Need visual prototypes or design directions? Try Claude Design first if you have access; use Figma Make if the work should stay in Figma.

Need Figma-to-code context? Use Figma MCP with Codex, Claude Code, Cursor, or v0.

Need code and Figma to roundtrip? Codex + Figma MCP and Claude Code + Figma plugin are the important workflows.

Need production UI in a repo? Use Codex, Claude Code, v0, or Cursor depending on how much control you want.

Need a founder MVP/app builder? Lovable, Bolt, or Replit can get a working demo started, but expect design cleanup.

Need creative production help? Watch Claude + Adobe, Blender, Autodesk, Canva/Affinity, Ableton, Splice, SketchUp, and Resolume connectors.
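The list above is effectively a lookup keyed by where the work needs to land. Here is a minimal TypeScript sketch of that idea. The `Destination` type, `TOOLS_BY_DESTINATION`, and `pickTools` are names invented here for illustration, and the tool lists only restate the recommendations above; nothing in it calls a real product API.

```typescript
// Hypothetical names for illustration only: Destination, TOOLS_BY_DESTINATION, pickTools.
// The entries restate this guide's recommendations; they are not an official taxonomy.
type Destination =
  | "visual-prototype"
  | "figma-to-code"
  | "design-code-roundtrip"
  | "repo-ui"
  | "founder-mvp"
  | "creative-production";

const TOOLS_BY_DESTINATION: Record<Destination, readonly string[]> = {
  "visual-prototype": ["Claude Design", "Figma Make"],
  "figma-to-code": ["Figma MCP with Codex, Claude Code, Cursor, or v0"],
  "design-code-roundtrip": ["Codex + Figma MCP", "Claude Code + Figma plugin"],
  "repo-ui": ["Codex", "Claude Code", "v0", "Cursor"],
  "founder-mvp": ["Lovable", "Bolt", "Replit"],
  "creative-production": ["Claude creative connectors"],
};

// Return the shortlist for a destination; the final choice still depends on
// how much control and review the team wants.
function pickTools(destination: Destination): readonly string[] {
  return TOOLS_BY_DESTINATION[destination];
}

console.log(pickTools("founder-mvp").join(", "));
```

Keying the map on the destination rather than the tool keeps the question in the order this guide recommends: decide where the output lands first, then shortlist tools.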

Quick comparison

The useful split is workflow fit, not brand hype. Use the tool that matches the problem in front of you.

| Tool | Best for | Not for | Designer use case | Trust level |
|---|---|---|---|---|
| Claude Design | Visual prototypes, slides, one-pagers, and broad design exploration | Durable Figma systems or final production UI | Explore polished directions through conversation, comments, edits, and design-system context | High if you have access; still research preview |
| Figma Make | Functional prototypes and web apps inside the Figma ecosystem | Replacing careful design-system work | Turn existing frames or prompts into interactive experiences without leaving Figma | High for Figma-native prototyping |
| Figma MCP | Figma-to-code context and design-code roundtrip work | Messy files with vague components, tokens, and states | Let agents read, write, and map design context against a maintained Figma system | High when the file is clean |
| Codex | Repo work, pull requests, and Figma-code roundtrips | Designers who cannot review code or product behavior yet | Delegate implementation work, inspect diffs, and move between code and editable Figma layers | High with repo review |
| Claude Code | Larger autonomous code tasks | Designers who cannot evaluate the output yet | Describe a larger task, then review the finished patch | High for fluent reviewers |
| v0 | Repo-connected UI generation and React implementation bridging | Replacing design judgment or product strategy | Turn a specific interface idea into reviewable product UI code | High with code review |
| Cursor | Learning while coding and editing existing files | Hands-off feature delivery without review | Work in the editor and see changes as they happen | High for supervised work |
| Stitch | Fast UI direction generation and wide visual exploration | Final systems, accessibility, or product-specific logic | Generate several directions before refining the strongest one elsewhere | Medium-high for first drafts |
| Lovable | Founder MVPs and full-stack app sketches | Polished design craft or differentiated product UI | Build a rough product so a designer can see what needs fixing | Medium for designers |
| Bolt | Fast browser-based app prototypes | Long-term product design systems | Try a working app idea quickly before investing in stack | Medium for designers |
| Replit Agent | Collaborative app builds, dashboards, and early product demos | Polished interface craft without design review | Use Design Canvas and Agent 4 to explore, plan, build, and deploy in one workspace | Medium for designers |
| Claude + creative connectors | Adobe, Blender, Autodesk, Ableton, Splice, SketchUp, and production workflows | Replacing product UI systems or Figma collaboration | Batch edits, retouching, 3D scene changes, scripting, resizing, and cross-tool handoff | High for repetitive production work |
| Adobe Firefly AI Assistant | Agentic creative work across Photoshop, Illustrator, Premiere, Lightroom, Express, and Firefly | Product UI source-of-truth workflows | Orchestrate multi-step creative edits while keeping Adobe tools and creator control in the loop | High for Adobe-heavy teams |

The AI design tool conversation changed again. A few months ago it was reasonable to ask "Stitch or v0?" like those tools were fighting for the same job. Now that framing is too small. Claude Design, Figma Make, Figma MCP, Codex, Claude Code, v0, Cursor, Lovable, Bolt, Replit, Adobe Firefly, and Claude's creative connectors all solve different problems.

The useful question is no longer "Which AI design tool is best?" The better question is: where does the work need to land? A Figma system? A high-fidelity prototype? A GitHub pull request? A working founder demo? A Photoshop file? A Blender scene? A deck? Pick the tool by destination.


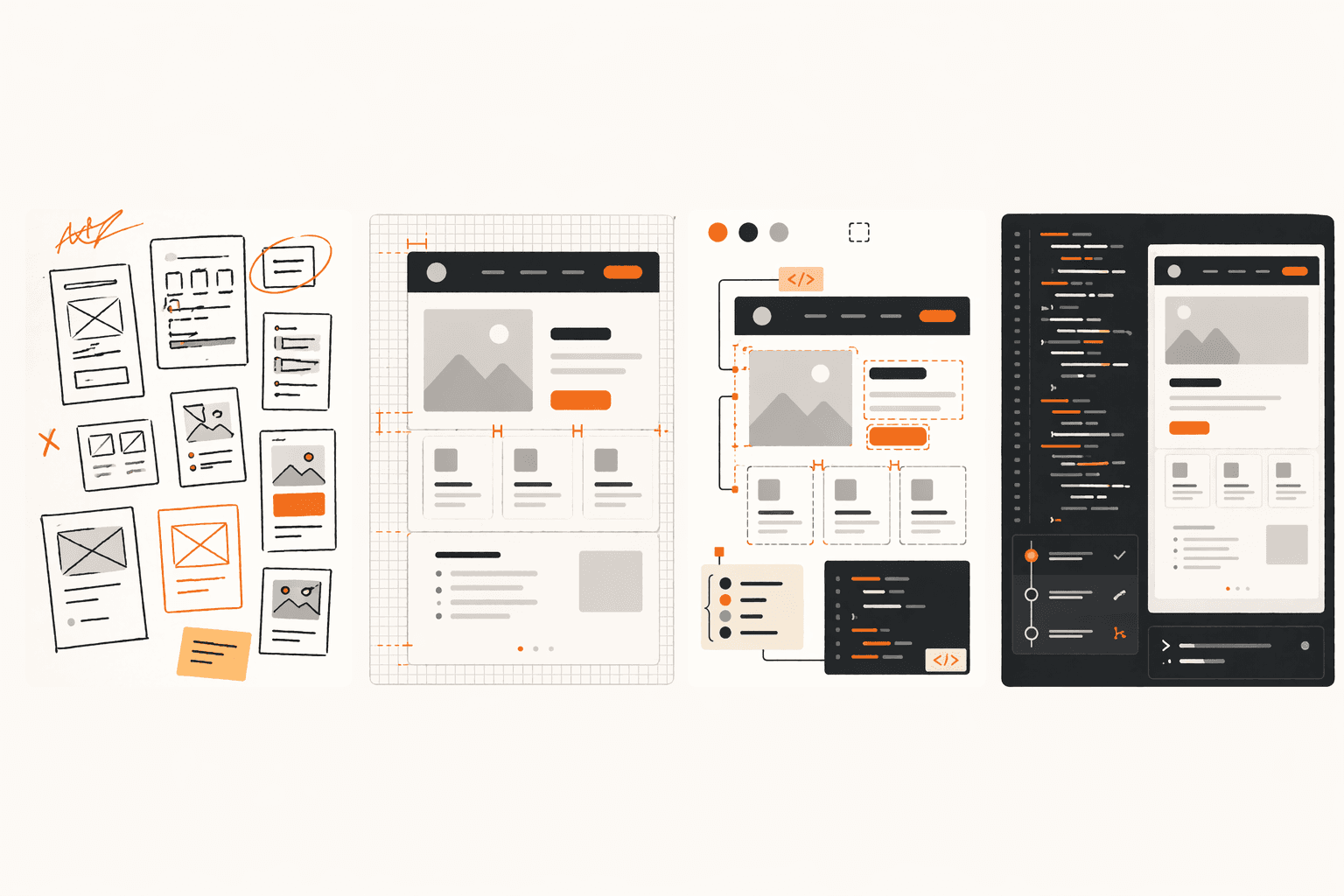

The Workflow Map

Visual prototypes are where Claude Design, Figma Make, and Stitch matter. They help you get from a written idea to something people can react to. The output can look polished, but it still needs product judgment, interaction logic, accessibility, and taste.

Figma systems and code roundtrips are where Figma MCP, Codex, and Claude Code matter. This is the lane for teams that need Figma to remain a shared source of truth while real code changes are happening.

Repo work is where Codex, Claude Code, v0, and Cursor matter. These tools are not just drawing screens. They are editing files, running code, creating diffs, opening pull requests, and making changes that have to survive review.

App builders are where Lovable, Bolt, and Replit matter. They can get a founder or designer to a working demo fast. The danger is mistaking a working demo for a finished product.

Creative production is the new lane most AI design articles miss. Claude's Adobe, Blender, Autodesk, Ableton, Splice, Affinity, SketchUp, and Resolume connectors are not about replacing Figma. They are about operating inside the production tools creative people already use.

Claude Design, Figma Make, and Stitch for visual prototypes

Claude Design is the new tool to take seriously first. Anthropic launched it on April 17, 2026 as a research preview for Pro, Max, Team, and Enterprise users. It creates designs, prototypes, slides, one-pagers, and other visual work, then lets you refine through conversation, inline comments, direct edits, and generated controls. The important detail is that Claude Design can also apply a team design system when it has access. That makes it more interesting than a generic prompt-to-screen generator.

Figma Make is the obvious choice when the work should stay in Figma. Figma describes it as a prompt-to-app tool for functional prototypes, web apps, and interactive UI. It can start from a prompt or from existing Figma designs, preserve structure and metadata, add interactions and data, and give you a code editor when you need more control. For a designer already working in Figma, that matters more than novelty.

Stitch still deserves a place. Google's March 2026 Stitch update rebuilt it around an AI-native canvas, voice interaction, a design agent, and fast multi-screen UI exploration. It is good for widening the option set. It is no longer the default recommendation for every designer.

Use Claude Design when

You need a polished visual prototype, deck, one-pager, or explorable product direction quickly. It is especially interesting when you can feed it brand or design-system context.

Use Figma Make when

The prototype should remain close to Figma, existing frames, collaborative review, or a Figma-native design system.

Use Stitch when

You want fast, wide UI exploration and do not need the first draft to be your final system of record.

These tools make first drafts cheaper. They do not remove the need for critique. Expect generic hierarchy, thin interaction logic, awkward states, and visual sameness unless a designer pushes the work past the first acceptable version.

Figma MCP, Codex, and Claude Code for design-code roundtrips

Figma's role is changing. It is still a design canvas, but the more important 2026 role is structured context. Figma's own framing is now code and canvas: product work can start in a prompt, a terminal, a Figma file, a rendered browser state, or a sketch, then move between those surfaces.

The new handoff is not one-way. The useful workflow is not just "designer makes Figma, developer writes code." It is Figma to code, code back to editable Figma layers, and then back into implementation when the design improves.

OpenAI and Figma's Codex integration makes that shift explicit. Codex can use Figma Design, Figma Make, or FigJam context to implement UI, and can also turn rendered UI from code into editable Figma designs. That is more relevant to designers than a generic coding-agent comparison because it softens the boundary between design exploration and implementation.

Claude Code has a similar lane through Figma's plugin and MCP setup. Figma's Claude Code docs recommend the remote Figma MCP server for most users and package MCP settings plus Agent Skills through the Figma plugin. In practice, that means Claude Code can pull design context, tokens, layout details, variables, and Code Connect mappings when implementing work.

The catch is simple: Figma MCP amplifies your system quality. Clean components, variables, tokens, semantic names, explicit states, and Code Connect mappings make agents better. A messy file does not magically become a product-ready interface because an agent can read it. It just produces messy implementation faster.
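One way to make "clean file" concrete is a small audit that runs before an agent ever reads the system. The sketch below is only an illustration under stated assumptions. The `ComponentInfo` shape is a hypothetical flat export, not the Figma API or MCP response format. The default-name pattern and the list of required states are assumptions to adjust for your own system.

```typescript
// Hypothetical input shape: a flat list of component names and the states each defines.
// This is NOT the Figma API or Figma MCP response format.
interface ComponentInfo {
  name: string;
  states: string[];
}

// Assumption: auto-generated layer names look like "Frame 214" or "Rectangle 3".
const DEFAULT_NAME = /^(Frame|Group|Rectangle|Ellipse|Line|Vector|Component)( \d+)?$/;

// Assumption: every interactive component should define at least these states.
const REQUIRED_STATES = ["default", "hover", "disabled"];

// Return human-readable problems for one component; an empty array means it passed.
function auditComponent(component: ComponentInfo): string[] {
  const problems: string[] = [];
  if (DEFAULT_NAME.test(component.name.trim())) {
    problems.push(`"${component.name}" looks like an auto-generated name`);
  }
  const defined = component.states.map((s) => s.toLowerCase());
  for (const state of REQUIRED_STATES) {
    if (defined.indexOf(state) === -1) {
      problems.push(`"${component.name}" is missing a "${state}" state`);
    }
  }
  return problems;
}

const sample: ComponentInfo[] = [
  { name: "Button/Primary", states: ["Default", "Hover", "Disabled"] },
  { name: "Frame 214", states: ["Default"] },
];

const report: string[] = [];
for (const component of sample) {
  report.push(...auditComponent(component));
}

console.log(report);
```

A check like this does not make the file good. It only surfaces the vague names and missing states an agent would otherwise carry straight into the implementation.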

This is the article that should split next

Figma MCP, Codex, and Claude Code deserve a narrower design-to-code guide. The hub answer is: use this lane when design needs to stay editable, code needs to stay reviewable, and neither side can be treated as a disposable export.

Codex, Claude Code, v0, and Cursor for repo work

This is where tool choice stops being a pure design decision. If the output lands in a repo, you need to care about diffs, environment setup, tests, product behavior, and whether you can review the result.

Codex deserves more weight than the old version of this article gave it. OpenAI describes Codex as a coding agent that can read, edit, and run code, work in cloud environments, and create pull requests. It can also run across editor, terminal, cloud, web, and desktop app workflows. For designers working close to engineering, the value is not that Codex "codes." The value is that you can delegate scoped implementation tasks and review actual product changes.

Claude Code is the strongest fit when you want an agent to read a codebase, make multi-step changes, run checks, and return a patch. The Figma plugin makes it especially relevant for designers implementing from real design files, but it still requires someone who can judge whether the final behavior and code are acceptable.

v0 is still the cleanest tool for repo-connected UI generation and front-end implementation bridging. Vercel rebuilt it around GitHub import, sandboxed repo work, environment-aware previews, and a Git panel. It is not magic production quality. It is good when you can describe a specific interface and review the resulting React code.

Cursor remains the best learning and supervised-editing tool in this group. If you want to see changes happen in the editor and understand what the agent is doing, Cursor is still easier to learn from than a fully delegated cloud-agent workflow.

Pick by review style

Want supervised editing? Cursor.

Want a specific UI generated in a repo-connected environment? v0.

Want larger autonomous repo tasks? Codex or Claude Code.

Want Figma and code to keep talking to each other? Codex + Figma MCP or Claude Code + Figma plugin.

Lovable, Bolt, and Replit for app builders

Lovable and Bolt still belong in the app-builder lane: fast, useful, and often generic. They are good for getting a working thing in front of people. They are not proof that the product is designed well.

Replit now deserves a spot here. Replit Agent 4 added a more creative build workflow. It covers apps, mobile apps, dashboards, and AI tools, and it adds a Design Canvas for exploring before committing to code, along with planning, parallel tasks, and tool connections. For founders and solo builders, that is a serious app-builder story.

For designers evaluating these tools, the question is not "Can it build?" It can. The question is whether you can identify what the builder skipped: empty states, account flows, layout resilience, hierarchy, accessibility, brand voice, pricing clarity, onboarding, and the places real users will get stuck.

Adobe, Blender, and creative production tools

This is the part most product-design roundups miss. On April 28, 2026, Anthropic announced Claude for Creative Work: connectors that let Claude work alongside creative tools instead of only generating standalone artifacts.

The Adobe connector is the headline for visual designers. Anthropic says it draws from 50+ Creative Cloud tools, including Photoshop, Premiere, Express, and more. Adobe's own Firefly AI Assistant is moving the same direction: a conversational agent that orchestrates multi-step workflows across Firefly, Photoshop, Premiere, Lightroom, Express, Illustrator, and other Adobe apps.

Blender is the other signal worth watching. Anthropic says Blender's connector uses the Python API so Claude can analyze scenes, debug setups, batch-apply changes, and create tools inside Blender. That matters for 3D, product renders, motion, architectural visualization, game assets, and designers who touch spatial or cinematic workflows.

This lane is not a Figma replacement. It is production assistance inside the tools creative people already use. For many readers, that may be more useful than another prompt-to-landing-page tool.

Use this lane for

Batch image changes, resizing campaign assets, retouching, Illustrator/Photoshop production, Premiere and Express workflows, Blender scene changes, SketchUp concepts, Autodesk Fusion edits, Ableton help, Splice sample search, and cross-tool file cleanup.

The Taste Argument

There's a common read of the AI tool landscape that says AI is making design easier and faster, and that the bar is therefore dropping. That's backward.

AI made competent-looking output cheap. That raised the baseline; it did not lower the bar. When anyone can produce a clean-looking landing page in ten minutes, clean-looking stops being the thing that gets your work noticed.

Taste — the judgment about what's worth making, what the specific product needs, what makes this interface different from every other AI-generated interface — becomes more valuable, not less.

The tools are converging on the same patterns because they're trained on overlapping data. Shadcn-styled components everywhere. The same card grids, the same dashboard layouts, the same hero sections. If your work looks like it could have come out of any of these tools, it probably did — or it reads that way to anyone paying attention.

The designers who win in this landscape are the ones who use AI tools to get to a competent baseline fast and then apply taste, intent, and product judgment to everything after that. The tools produce the first 70%. The last 30% is still a human job, and it's the part that actually differentiates your work.

Where to Start

Specific prescriptions by designer type:

If you're a design student or early junior

Learn Figma first because the component model, constraints, variables, and collaboration habits still matter. Then use Claude Design, Figma Make, or Stitch to explore faster. Start using Cursor on small personal projects to build coding intuition. Underneath all of these tools, browser fluency matters most: you need to know how interfaces behave before you can judge AI output.

If you're mid-level and learning to ship code

Cursor is still the right starting point if you want to see what is changing. Add v0 when you want specific UI turned into reviewable React. Add Codex or Claude Code when you can review larger diffs. Pair all of that with Figma MCP if your design system needs to inform the code. This is where the designers-shipping-code path actually opens up.

If you're senior and shipping with a team

Your investment is in infrastructure, not novelty. Clean up your Figma tokens and components so MCP reads them coherently. Decide whether Codex, Claude Code, Cursor, or v0 is the right repo workflow for your team. Use Figma Make or Claude Design for exploration, but keep Figma as the place where decisions become system context. The payoff comes from getting the whole team producing higher-quality AI output through better shared systems.

If you're a non-technical founder

Lovable, Bolt, or Replit are the right call for fast MVPs. Use them to learn whether the idea has legs. When the product gets real users and starts to matter, bring design craft in — whether by hiring, by using a product critique workflow, or both.

If you're transitioning toward design engineering

Practice the same small product surface through different destinations: Claude Design for directions, Figma Make for an interactive prototype, v0 for UI code, Codex or Claude Code for repo changes, and Figma MCP for design-code context. The meaningful skill is knowing what each tool is allowed to decide. That's the design engineer skill in practice.

If you do creative production work

Watch Claude's creative connectors and Adobe Firefly AI Assistant closely. This is the lane for Photoshop, Illustrator, Premiere, Lightroom, Firefly, Blender, SketchUp, Autodesk Fusion, Ableton, Splice, and production work that gets repetitive. It will not replace taste, but it can remove a lot of tool friction.


One more thing

AI raised the baseline of what anyone can produce. That means the gap between "AI-generated competent" and "actually considered and shipped well" is where design work now lives. The tools are the easy part. The harder skill is looking at AI output and knowing what's wrong with it — specifically, actionably, and in a way that makes the work better.

That's the skill that underpins every phase of this workflow. And it's what The Crit is built around. The tools change every six months. The ability to evaluate, refine, and improve design work — whether it came from a human or an agent — doesn't go out of date.


Written by

Nikki Kipple, Product Designer, Builder & Design Instructor

Designer, educator, founder of The Crit. I've spent years teaching interaction design and reviewing hundreds of student portfolios. Good feedback shouldn't require being enrolled in my class, so I built a tool that gives it to everyone.
